An update on this project: I really enjoy writing this newsletter and have gotten good feedback on it. But it takes time (you may have noticed that there were only three newsletters in the last 6 months). So I’m very pleased to announce I’ve received funding from Emergent Ventures and the go-ahead from Iowa State University to carve out a bit of time specifically for this project, at least for the next year. The plan is to release a newsletter like this one every other Tuesday.

After a year, I’ll re-assess things. If you want to help make this project a success, you can subscribe or tell other people about it. Thanks for your interest everyone!

----

It seems clear that a better understanding of the regularities that govern our world leads, in time, to better technology: science leads to innovation. But how long does this process take?

Two complementary lines of evidence suggest 20 years is a good rule of thumb.

A First Crack

James Adams was one of the first to take a crack at this in a serious quantitative way. In 1990, Adams published a paper titled "Fundamental Stocks of Knowledge and Productivity Growth" in the Journal of Political Economy. Adams wanted to see how strong the link was between academic science and the performance of private industry in subsequent decades.

Adams had two pieces of data that he needed to knit together. On the science side, he had data on the annual number of new journal articles in nine different fields, from 1908 to 1980. On the private industry side, he had data on the productivity of 18 different manufacturing industries over 1966-1980. Productivity here is a measure of how much output a firm can squeeze out of the same amount of capital, labor, and other inputs. If a firm can get more output (or higher quality outputs) from the same amount of capital and labor, economists usually assume that reflects an improved technology (though it can also mean other things). What Adams basically wanted to do was see if industries experienced a jump in productivity sometime after a jump in the number of relevant scientific articles.

The trouble is that pesky word “relevant.” Adams has data on the number of journal articles in fields like biology, chemistry, and mathematics, but that's not how industry is organized. Industry is divided into sectors like textiles, transportation, and petroleum. What scientific fields are most relevant to the textiles industry? To transportation equipment? To petroleum?

To knit the data together, Adams used a third set of data: the number of scientists in different fields that work for each industry. To see how much the textiles sector relies on biology, chemistry, or mathematics, he looked at how many biologists, chemists, and mathematicians the sector employed. That data did exist. If they employed a lot of chemists, they probably used chemistry; if they employed lots of biologists, they probably used biology, and so on. He weighted the number of articles in each field by the number of scientists working in that field to get a measure of how much each industry relies on basic science.
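To make the weighting step concrete, here is a minimal sketch. All of the numbers, the field and industry names, and the `knowledge_base` helper are made up for illustration, and the exact functional form Adams used may differ; the only thing taken from the description above is the idea of weighting each field's article count by the number of scientists from that field an industry employs.

```python
# Hypothetical article counts by field (illustrative only, not Adams' data).
articles = {"biology": 1000, "chemistry": 2000, "mathematics": 500}

# Hypothetical counts of scientists employed by each industry, by field.
scientists = {
    "textiles": {"biology": 5, "chemistry": 90, "mathematics": 5},
    "petroleum": {"biology": 10, "chemistry": 60, "mathematics": 30},
}


def knowledge_base(industry_scientists, articles_by_field):
    """Sum article counts across fields, weighted by scientists employed per field."""
    return sum(
        headcount * articles_by_field[field]
        for field, headcount in industry_scientists.items()
    )


# One "relevant science" number per industry.
science_base = {
    industry: knowledge_base(staff, articles)
    for industry, staff in scientists.items()
}
print(science_base)
```

In this toy setup the textiles measure is driven almost entirely by chemistry articles, because that is where most of its (hypothetical) scientists work.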

So now Adams has data on the productivity and relevant scientific base of 18 different manufacturing sectors. He expects more science will eventually lead to more productivity, but he doesn’t know how long that will take. If there was a surge in scientific articles in a given year, at what point would Adams expect to see a surge in productivity? If scientific insight can be instantly applied, then the surge in productivity should be simultaneous. But if the science has to work its way through a long series of development steps, then the benefits to industry might show up later. How much later?

To come up with an estimate, Adams basically looked at how strong the correlations were at lags of 5 years, 10 years, and 20 years. Specifically, he looked to see which lag gave him the strongest statistical fit between scientific articles produced in a five-year span and the productivity increase in a later five-year span for industries that use that field's knowledge intensively. Of the time lags he tried, he found the strongest correlation at 20 years.
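The lag-comparison idea can be sketched on synthetic data. This is not Adams' regression: the series below are randomly generated, the "true" lag of 20 years is built in by construction, and a plain Pearson correlation stands in for his statistical fit. The names `science`, `productivity`, and `lagged_correlation` are hypothetical.

```python
import random


def pearson(x, y):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


random.seed(0)
YEARS = 80
TRUE_LAG = 20  # assumption baked into the synthetic data

# Synthetic "science" series, and a "productivity" series that echoes it
# TRUE_LAG years later, plus noise.
science = [random.gauss(0, 1) for _ in range(YEARS)]
productivity = [
    (science[t - TRUE_LAG] if t >= TRUE_LAG else 0.0) + random.gauss(0, 0.3)
    for t in range(YEARS)
]


def lagged_correlation(lag):
    """Correlate science in year t with productivity in year t + lag."""
    return pearson(science[: YEARS - lag], productivity[lag:])


# Try the same three candidate lags and keep the best-fitting one.
fits = {lag: lagged_correlation(lag) for lag in (5, 10, 20)}
best_lag = max(fits, key=fits.get)
print(fits, best_lag)
```

Because the synthetic productivity series is just a noisy copy of science shifted by 20 years, the 20-year lag should stand out clearly; real data are far messier, which is part of why this kind of evidence is hard to make fully convincing.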

Adams’ study was an important first step, and more recent work has largely validated his original findings.

A Bayesian Alternative

Nearly thirty years later, Baldos et al. (2018) tackled a similar problem with different data and a more sophisticated statistical technique. Unlike Adams, they focused on a single sector - agriculture. Like Adams, they had two pieces of data they wanted to knit together.

On the science side, a small group of ag economists has spent a long time assembling a data series on total agricultural R&D spending by US states and the federal government. Until recently, governments were a really big source of agricultural research dollars, and those dollars can be relatively easily identified since they frequently flow through the Department of Agriculture or state experiment stations. The upshot is Baldos et al. have data on public sector agricultural R&D going back to 1908. Meanwhile, on the technology side, the USDA maintains a data series on the total factor productivity of US agriculture from 1949 to the present day. So like Adams, they’re going to look for a statistical correlation between productivity and research. Unlike Adams, they’re going to use dollars to measure science, and they’re going to focus on a single sector (and not a manufacturing sector).

To deal with the fact that we don’t know when research spending impacts productivity growth, they’re going to adopt a Bayesian approach. How long does it take agricultural spending to influence productivity? They don’t know, but they make a few starting assumptions:

They assume impact will follow an upside-down “U” shape. The idea here is that new knowledge takes time to be developed into applications, and then it takes time for those applications to be adopted across the industry. During this time, the impact of R&D done in one year on productivity in subsequent years is rising. At some point - maybe 20 years later - the impact of that R&D on productivity growth hits its peak. But after that point, the R&D becomes less relevant, so that its impact on productivity growth in subsequent years declines. Eventually, the ideas become obsolete and have no additional impact on increasing productivity.

They assume the impact of R&D on productivity after 50 years is basically zero.

They assume the peak impact will occur sometime between 10 and 40 years, with the most likely outcome somewhere around 20. This assumption is based on earlier work, similar in spirit to Adams.

Given these estimates, they are basically assuming there’s a bunch of different possible distributions for the relationship between R&D spending and productivity. They assume the most likely distribution is one peaking around 20 years, and the farther the distribution is from that, the more unlikely. They then use Bayes’ rule to update their beliefs, given the data on R&D spending and agricultural productivity. It will turn out that some of those distributions fit the data quite well, in the sense that if that distribution of R&D impacts is true, then the R&D spending data matches productivity pretty well. Others fit quite poorly. We update our beliefs after observing the data, increasing our belief in the ones that fit the data well, and decreasing our beliefs in the ones that don’t.
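The updating logic can be sketched with a toy grid of candidate lag shapes. Everything specific here is an assumption made for illustration, not the specification in Baldos et al.: the triangular inverted-U weights, the bell-shaped prior over peak years 10 to 40, the Gaussian likelihood, the noise level, and the synthetic R&D series (generated with a true peak at 20 years).

```python
import math
import random

MAX_LAG = 50  # impact is assumed to be zero by 50 years


def lag_weights(peak):
    """Triangular inverted-U weights over lags 0..MAX_LAG, peaking at `peak`."""
    raw = [
        lag / peak if lag <= peak else (MAX_LAG - lag) / (MAX_LAG - peak)
        for lag in range(MAX_LAG + 1)
    ]
    total = sum(raw)
    return [w / total for w in raw]


def predicted(rd, peak):
    """Productivity implied by past R&D under a given lag shape."""
    w = lag_weights(peak)
    return [
        sum(w[lag] * rd[t - lag] for lag in range(MAX_LAG + 1))
        for t in range(MAX_LAG, len(rd))
    ]


# Synthetic data: R&D spending, and productivity generated with a peak at 20.
random.seed(1)
TRUE_PEAK, NOISE_SD = 20, 0.02
rd = [random.uniform(0, 10) for _ in range(150)]
observed = [y + random.gauss(0, NOISE_SD) for y in predicted(rd, TRUE_PEAK)]

# Prior: peak somewhere between 10 and 40 years, most likely around 20.
candidates = list(range(10, 41))
prior = {p: math.exp(-0.5 * ((p - 20) / 8) ** 2) for p in candidates}


def log_likelihood(peak):
    """Gaussian log-likelihood (up to a constant) of the observed series."""
    resid = [o - m for o, m in zip(observed, predicted(rd, peak))]
    return -0.5 * sum(r * r for r in resid) / NOISE_SD**2


# Bayes' rule on the grid: posterior is proportional to prior times likelihood.
log_post = {p: math.log(prior[p]) + log_likelihood(p) for p in candidates}
top = max(log_post.values())
unnorm = {p: math.exp(v - top) for p, v in log_post.items()}
norm = sum(unnorm.values())
posterior = {p: u / norm for p, u in unnorm.items()}

best_peak = max(posterior, key=posterior.get)
print(best_peak)
```

Shapes whose implied productivity tracks the observed series get most of the posterior weight, and shapes that fit poorly are driven toward zero, which is the "increase our belief in the ones that fit" step described above.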

They find, with 95% probability, that science has its strongest impact on productivity after 15-24 years, with a best point estimate of around 20 years.

Evidence from Citations

So whether for manufacturing or agriculture, using slightly different data and statistical techniques, we find a correlation between productivity growth and basic science that is strongest around 20 years. But at the end of the day, both methods look for correlations between two messy variables separated in time by decades. It’s hard to make this completely convincing.

A cleaner alternative is to look at the citations made by patents to scientific articles. If we assume patents are a decent measure of technology (more on that later) and journal articles a decent measure of science, then citations of journal articles by patents could be a direct measure of technology’s use of science. In the last few years, data on patent citations to journal articles has become available at scale, thanks to advances in natural language processing (see Marx and Fuegi 2019, Marx and Fuegi 2020, and the patCite project).

Do citations to science actually mean patented technologies use the science? Maybe the citations are just meant to pad out the application? There's a lot of suggestive evidence that the citations do reflect real use. For one, firms say they do. Arora, Belenzon, and Sheer (2017) use a 1994 survey from Carnegie Mellon about the use of science by firms. Firms that cite a lot of academic literature in their patents are also more likely to report in the survey that they use science in the development of new innovations.

There's also a variety of evidence that patents that cite scientific papers are different from patents that don't. Watzinger and Schnitzer (2019) scan the text of patents, and find patents that cite science are more likely to include combinations of words that have been rare up until the year the patent was filed. This suggests these patents are doing something new; they don't read like older patents. Maybe they are using brand new ideas or insights that they have obtained from science? They also find these patents tend to be worth more money. Ahmadpoor and Jones (2017) find these science-ey patents also tend to be more highly cited by other patents.

So let’s go ahead and assume citations to journal articles are a decent measure of how technology uses science. What do we learn from doing that?

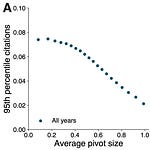

Marx and Fuegi (2020) apply natural language processing and machine learning algorithms to the raw text of all US patents, to pull out all citations made to the academic literature. They find about 29% of patents make some kind of citation to science. More importantly for our purposes, for patents granted since 1976, the average gap in time between a patent’s filing date and the publication date of the scientific articles it cites is 17 years.
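As a rough illustration of how these two summary numbers are computed, here is a sketch on a handful of invented records. The patent ids, years, and data layout are hypothetical; the real calculation runs over the full Marx and Fuegi dataset, whose format is not assumed here.

```python
# Hypothetical records: patent id -> (filing year, publication years of cited articles).
patents = {
    "P1": (2000, [1980, 1990]),
    "P2": (2005, [1985]),
    "P3": (2010, []),
    "P4": (1995, []),
}

# Patents that make at least one citation to science.
citing = {pid: rec for pid, rec in patents.items() if rec[1]}
share_citing = len(citing) / len(patents)

# Gap in years between each patent's filing date and each article it cites.
gaps = [filed - pub for filed, pubs in citing.values() for pub in pubs]
mean_gap = sum(gaps) / len(gaps)

print(share_citing, mean_gap)
```

Note that the average here is taken over patent-to-article citation pairs, so a patent citing many papers contributes many gaps; averaging per patent first would be a different (also reasonable) summary.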

This is pretty close to the twenty years estimated using the other techniques, especially when we consider that the date a patent is filed is not necessarily the date it begins to affect productivity (which is what the other studies were measuring). Any new invention that seeks patent protection might take a few years before it is widely diffused and begins to affect productivity. In that case, a twenty-year estimate is pretty close to spot on.

Winding paths from science to technology

Of course, there is variation around that 17-year figure. Ahmadpoor and Jones (2017) found that the average shortest time gap between a patent and a cited journal article was just 7 years. And on the other side, there are also longer and more indirect paths from science to technology than direct citation. Ahmadpoor and Jones cite Riemannian geometry as an example. Riemannian geometry was developed by Bernhard Riemann in the 19th century as an abstract mathematical concept, with little or no real world application until it was incorporated in Einstein's general theory of relativity. Later, those ideas were used to develop the time dilation corrections in GPS satellites. That technology, in turn, has been useful in the development of autonomous vehicles. In a sense then, patents for self-driving tractors owe some of their development to Riemannian geometry, though this would only be detectable by following a long and circuitous route of citation.

When these longer and more circuitous chains of citation are followed, Ahmadpoor and Jones find that 61% of patents are "connected" to science; patents that do not directly cite scientific articles may cite patents that do, or they may cite patents that cite patents that do, and so on. About 80% of science and engineering articles are "connected" to patents, in the sense that some chain of citation links them to a patent. (Technical note: this probably understates the actual linkages, because it is based only on citations listed on a patent’s front page; Marx and Fuegi 2020 show additional citations are commonly found in a patent’s text).

The authors compute metrics for the average "distance" of a scientific field from technological application, and these distance metrics largely line up with our intuitions. For example, materials science and computer science are fields where we might expect the results to be quite applicable to technology, and indeed, their papers tend to be among the closest to patents - just 2 steps away from a patent (cited by a paper that is, in turn, cited by a patent). Atomic/molecular/chemical physics papers would also seem likely to have applications, but only after more investigation, and they tend to be 3 steps removed from technology (cited by a paper that is cited by a paper that is cited by a patent). And as we might expect, the field that is farthest from immediate application is pure mathematics (five steps removed). These longer citation paths also take longer when measured in time. The average time gap along the shortest path from a math paper to a patent is more than 20 years.
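The "steps removed" idea is a shortest-path distance in the citation network, which can be sketched with a breadth-first search. The tiny graph below and the function name `steps_to_patent` are invented for illustration; this is not Ahmadpoor and Jones' code or data, only the general technique.

```python
from collections import deque

# Hypothetical citation graph: each node maps to the documents that cite it.
cited_by = {
    "math_paper": ["physics_paper"],
    "physics_paper": ["materials_paper"],
    "materials_paper": ["patent_A"],
    "cs_paper": ["patent_B"],
    "patent_A": ["patent_B"],
}
patents = {"patent_A", "patent_B"}


def steps_to_patent(start):
    """Fewest citation steps from `start` forward to any patent, or None."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        if node in patents:
            return dist
        for citer in cited_by.get(node, []):
            if citer not in seen:
                seen.add(citer)
                queue.append((citer, dist + 1))
    return None  # no chain of citations reaches a patent


for paper in ("cs_paper", "materials_paper", "physics_paper", "math_paper"):
    print(paper, steps_to_patent(paper))
```

A paper cited directly by a patent is 1 step away, a paper cited by such a paper is 2 steps away, and so on; a document with no forward chain to any patent is "unconnected" and returns `None`.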

On the other side of the divide, they also compute the average distance of technology fields to science, and these also align with our intuitions. Chemistry and molecular biology patents would probably be expected to rely heavily on science, and they tend to be slightly more than 1 step removed from science (most directly cite scientific papers). Further downstream, electrical computers tend to be two steps removed (they cite a patent that cites a scientific article), and ancient forms of technology like chairs and seats tend to be the farthest from science (five steps removed). The average time gap along the shortest citation path between a chair/seat patent and a scientific article is also over 20 years.

Converging Evidence

All told, it’s reassuring that two distinct approaches arrive at a similar figure. The patent citation evidence is reasonably direct - we see exactly what technology at what date cites which scientific article, as well as the date that article was published. This line of evidence finds an average gap of about 17 years, with plenty of scope for shorter and longer gaps as well. The trouble with this evidence is that patents are far from a perfect measure of technology. Lots of things do not ever get patented, and lots of patents are for inventions of dubious quality.

For that reason, it’s nice to have a different set of evidence that does not rely on citations or patents at all. When we try to crudely measure “science”, either by counting government dollars or scientific articles, we can detect a correlation between increases in science and the productivity of industries expected to use it 20 years later - again, with plenty of scope for shorter and longer lags as well.
